The Dangers of Artificial Mediocrity

How AI might be worse at our jobs, but still take them

By Eirik Holm Gjerstad

The potential of artificial intelligence to destroy jobs is frequently discussed. Far less attention has been paid to the danger it poses to the quality of knowledge, art and culture, and to our very ability to ask the questions on which these are built.

The World of Bookcraft

For culture and the arts, the worst case would not be if artificial intelligences turn out to be qualitatively better than humans and outcompete them, but if they turn out to be worse and still outcompete them. It would not be the first time that a technological breakthrough allowed the qualitatively inferior to outcompete the qualitatively superior. The novelty would be that this now applies to creative work and knowledge production.

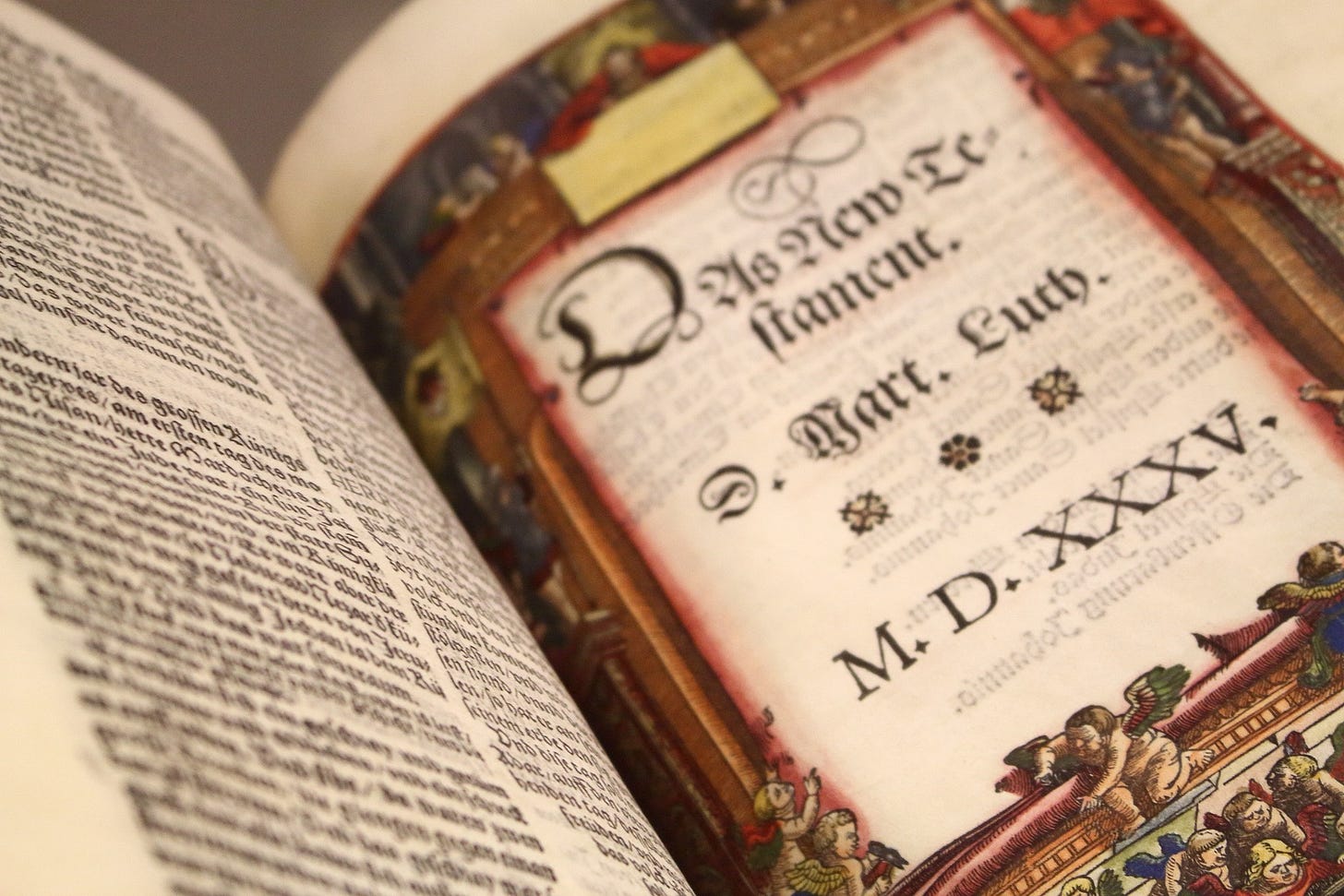

Take the example of bookcraft. The quality of books perhaps peaked in the Middle Ages. A medieval monk might have remarked to a fellow monk that a mechanical printing press would never deliver a product comparable to their handwritten books, where each page was a carefully crafted work of art, beautifully illustrated and gilded, written in the subtly varying, living script of the human hand, and bound in the skin of lambs the monk himself had selected for the purpose. And the monk would not have been wrong. Visit the British Library or any other collection of medieval books and see the qualitative superiority for yourself. Nevertheless, the mechanical printing press made this craft redundant and outcompeted it. In this case we consider the change an improvement, because the material quality of the individual book matters less than its content, and because the content of books became far more accessible, diverse and qualitatively better as a result of the technological revolution.

But this time may be different, because this technological breakthrough concerns what books contain, or used to contain. For some high school and university students, artificial intelligences already produce better assignments than they can. Those for whom this is not yet the case now face the choice of whether the difference between a B and an A is worth hundreds of hours of hard, frustrating work acquiring knowledge unaided.

And perhaps the quality of knowledge is already compromised, even before these new artificial intelligences are taken into account. High school teachers already report that students have become worse at languages, just as Google Translate has become better. They report that students have become worse at math, even though tools such as Maple and WolframAlpha have made it easier than ever to skip tedious arithmetic, algebraic simplification and memorized rules for manipulating symbols, and instead focus on the content and ideas of mathematics. Students differentiate the expression they are given and receive full marks for the answer, without having understood that what the differentiation produced was, for example, a body's acceleration.

The availability and efficiency of knowledge tools may already be compromising the quality of knowledge. What happens, then, when these tools face a revolution?

The High Cost of Cheap Answers

An example of such a revolution might be Microsoft's upcoming Copilot AI, which is expected to be integrated into the Office suite and will therefore likely be just as widespread and accessible. In a demonstration of the new tool, Copilot is asked to identify '3 key trends' in last quarter's sales figures in an Excel spreadsheet, and then to create a PowerPoint presentation based on them. What used to take a human days now takes Copilot a few seconds. But what exactly is being asked? Is '3 key trends' equivalent to '3 significant trends'? If so, what criterion of significance is being used? What counts as a significant effect varies from one scientific field to another. Over what time horizon, and on what data, is a 'trend' measured? Is it measured against just the previous quarter? The last four quarters? Against other departments of the company? Against other companies? Are 'trends' assessed relative to broader societal and civilizational developments? Relative to a geological or cosmic time horizon?

The good news is that with these artificial intelligences, it will probably be easier than ever to get answers to such follow-up questions. Until now, one would have had to ask people, perhaps get no answer, or wait a week for an email to arrive, by which time one had long forgotten the original question and the context of the answer.

However, easy answers do not necessarily prompt difficult questions. On the contrary, there is a danger that with the speed and ease of the answer itself, the consideration of what questions were asked in the first place will disappear.

When the production of answers is streamlined to an unprecedented degree, it may undermine the asker's understanding of what their question even is. As the quality of questions declines, so does the quality of what is asked for, and the world becomes stupider and worse.

Never have more sources been available to more people for informing themselves about politics, history or science. But have people correspondingly never been more critical of those sources, now that such criticism matters more than ever? The answer is at least not obvious, and it may not be the same for everyone.

It is possible that this danger to the quality of knowledge, art and culture will never materialize, and that with the help of artificial intelligence we will become smarter and more knowledgeable than ever before, so that the quality of knowledge, art and culture will only improve. I would not even rule out that this fortunate scenario is more likely than the unfortunate one. But the danger and its nature are still not sufficiently understood.

New production methods change the products. The emergence of new building materials changes what one builds and how. For a while, old products are made in new ways, but over time the products themselves change, and not always for the better: the new products need not be of higher quality if they are simply cheap enough and plentiful enough.

Therefore, the worst case is not that artificial intelligences turn out to produce better music, images, films, poems, stories, or insights into mathematics, physics, chemistry, biology, history and language, but that they turn out not to, yet still outcompete humans.

Eirik Holm Gjerstad is a literary author, IT specialist and philosopher who works in the pharmaceutical industry. In his spare time he is trying to reverse the subjective turn in ontology. If you are interested in more from Eirik, check out his music and his recent book.
