How Publication Bias Threatens Science and What We Should Do About It

Publication bias threatens our collective knowledge. Luckily, you can easily assess the problem using readily available statistical tools.

By Adam Finnemann

Don’t underestimate publication bias; it distorts our scientific literature and leads to false beliefs. Publication bias arises when studies reporting statistically significant results are more likely to be published than those yielding non-significant outcomes. This can happen at the journal level, when editors reject manuscripts because null findings seem uninteresting, or at the researcher level, when projects are abandoned midway because of disappointing results.

In my previous post for Unreasonable Doubt I highlighted that prestige and economic incentives have weakened peer review and caused low-quality science to be published, meaning that scientists would need to rely on meta-analysis and other forms of evidence synthesis over individual studies. In this blog, I will explain why this path is made difficult by publication bias. Despite this challenge, there is a silver lining: methods to address publication bias exist, but we need to use them.

Evidence of Publication Bias

Recently, publication bias has caused some controversy in the field of nudging, an approach to policymaking that creates tools to prompt behavior change without curtailing individual freedom. For years, it has been the 'golden goose' of behavioral science. It was popularized in 2008 with the release of the best-selling book, "Nudge," by Richard Thaler and Cass Sunstein. This area of study gained additional momentum following the success of Daniel Kahneman's book, "Thinking, Fast and Slow." In his work, Kahneman compellingly challenges the notion of perfect rationality by suggesting that human decisions operate within two distinct systems: a fast one that relies on intuition, and a slow one that is thoughtful and deliberate. Nudge techniques exploit the vulnerabilities of these two systems to promote beneficial behaviors, such as increasing organ donation rates, enhancing traffic orderliness, and fostering healthier eating habits. The promising results and potential of these techniques have resulted in substantial funding pouring into the field, giving rise to numerous dedicated research centers. In turn, the resulting research has had significant real-world implications, influencing policy guidance and effecting measurable change.

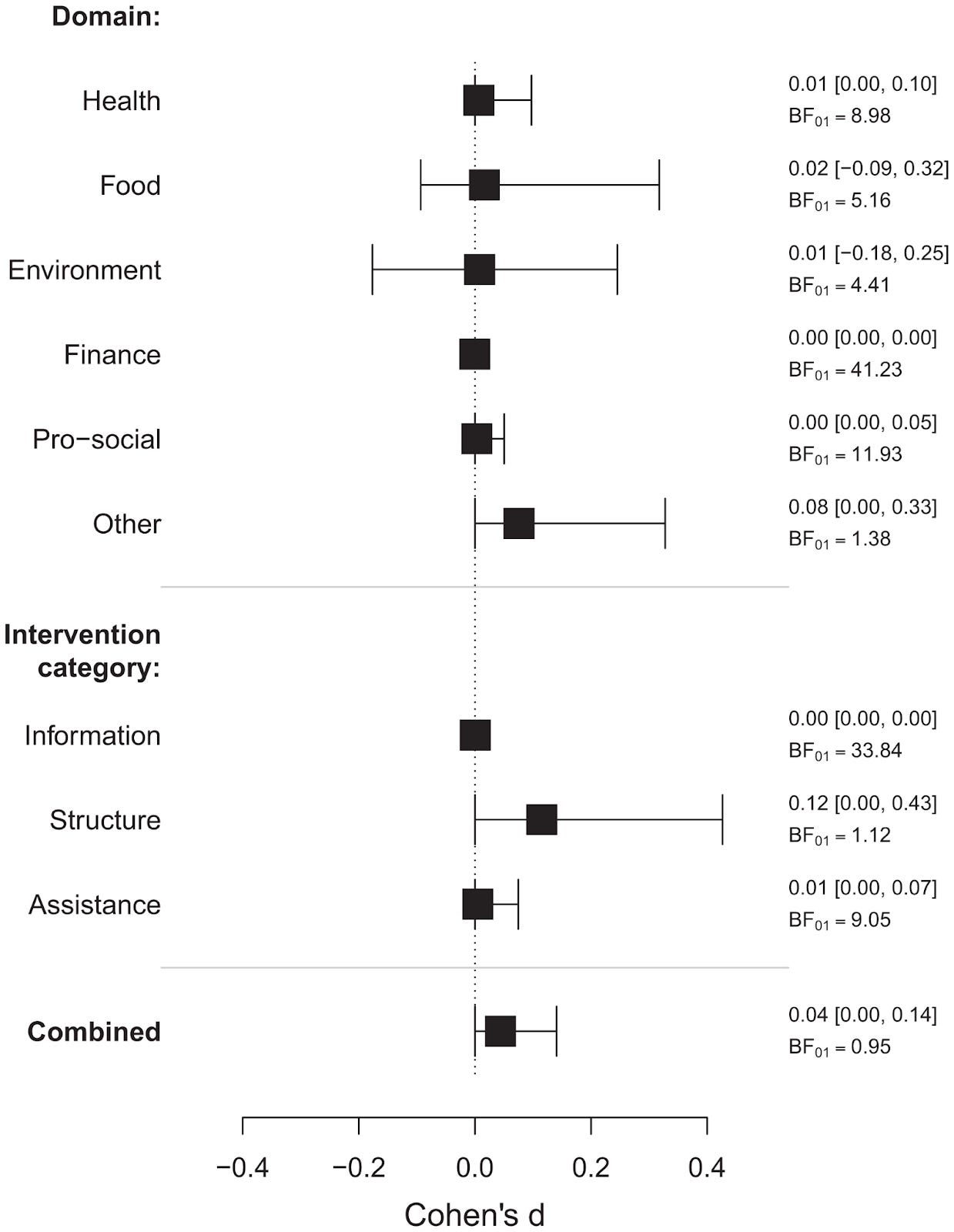

Last year, a comprehensive meta-analysis aimed to assess the general effectiveness of the wide array of techniques encompassed by nudging (Mertens et al., 2022). This analysis revealed a small to medium effect (an effect is typically an observed difference between two groups of individuals or experimental conditions). It also found evidence for publication bias but maintained the primary conclusion that nudging is effective. In response to these findings, Maier et al. (2022) examined the publication bias further using dedicated publication bias adjustment methods (see Textbox 1 further down for a brief introduction to publication bias detection and the RoBMA method used in this study). As a result, they proposed that "publication bias in nudging research" would be a more accurate headline for the original article. This conclusion is evident in Figure 1, which depicts the publication bias-adjusted effects of nudging across various domains and interventions. In every instance, zero lies within the interval around the estimate, which means the adjusted effect cannot be distinguished from no effect at all. Unfortunately for the so-called 'golden goose' of behavioral science, it appears that nudging is largely a curiosity of the publication system rather than an effect in the real world.

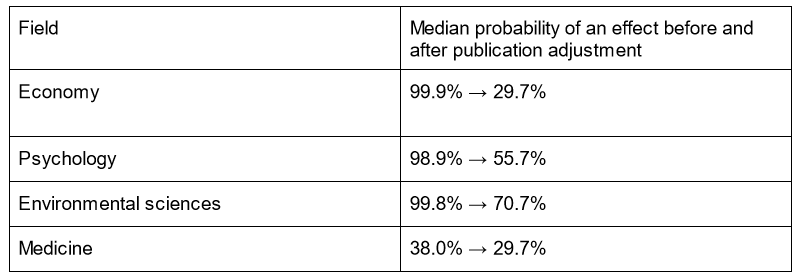
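Reading a figure like this comes down to one check per row: does the interval around the estimate contain zero? The sketch below shows that check using a simple normal-approximation interval. This is only an analogy, since RoBMA derives its intervals from a Bayesian model rather than from this formula, and the numbers are hypothetical values chosen for illustration, not figures from Maier et al.

```python
from statistics import NormalDist


def confidence_interval(estimate, std_error, level=0.95):
    """Normal-approximation interval around an effect estimate."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # critical value, about 1.96 for 95%
    return estimate - z * std_error, estimate + z * std_error


def includes_zero(interval):
    """True when zero lies inside the interval (no significant effect)."""
    lower, upper = interval
    return lower <= 0.0 <= upper


# Hypothetical numbers, for illustration only.
unadjusted = confidence_interval(estimate=0.40, std_error=0.05)
adjusted = confidence_interval(estimate=0.05, std_error=0.06)

print("unadjusted includes zero:", includes_zero(unadjusted))
print("adjusted includes zero:", includes_zero(adjusted))
```

In this made-up case the unadjusted interval excludes zero while the adjusted one contains it, which is the pattern the nudging re-analysis reports.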

Though it might be one of the more egregious examples, the problem of publication bias is not limited to nudging. Another recent large-scale project examined 700,000 scientific results from 67,000 meta-analyses across medicine, psychology, economics, and environmental sciences. The study analyzed the strength of the empirical evidence before and after adjustment for publication bias. Table 1 shows that in psychology and economics, studies generally concluded that effects were present with around 99% certainty. However, after adjusting for publication bias, this certainty decreases to roughly 30%. For readers with some statistical background, Appendix 1 offers a more comprehensive, and more technical, overview of the change in scientific evidence after attempting to adjust for publication bias across the disciplines.

Economics emerges as the discipline most affected by publication bias, followed by psychology and environmental science. Interestingly, the pre-adjusted probabilities for these fields are strikingly similar, whereas their post-adjustment values differ, suggesting varying base rates of correct hypotheses. The positive news is that medicine fares much better, mostly due to a much lower rate of publication bias before adjustment. This lower rate is likely due to mandatory pre-registration and other no-brainer initiatives; we can only speculate as to why they haven’t made it into the other fields yet…

Ways forward

What can be done about publication bias? I see two important ways forward: we can sweep the existing literature for bias, and we can strive to build a publication bias-free path forward. In the following, I briefly discuss how to do both.

For anyone relying on existing scientific literature, it is important to do your own publication bias detection and adjustment. Software to do this is relatively accessible, although the theory behind it is less so. For instance, the free and open-source software tool JASP can run a RoBMA analysis. This method fits the most prominent publication bias adjustment models and returns the average adjusted estimate across all the models (RoBMA was also used in the nudging and cross-field examples given earlier). Running such a model requires two columns of information that are readily available from most meta-analyses: a list of effect estimates and a list of uncertainty estimates such as standard errors. We give the basic intuitions for diagnosing publication bias in the following block. This part is a bit technical and only for the curious or anyone doing their own meta-analysis.

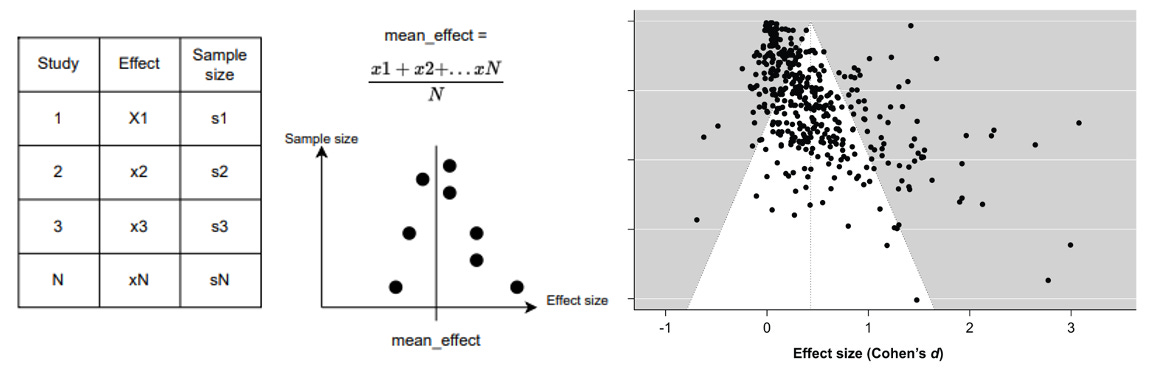

In Panel 1, we present the basic intuition for detecting publication bias. The process starts by collecting the effects and sample sizes of N studies. Funnel plots then show the effects against the sample sizes. The middle funnel plot shows the ideal case with no publication bias. The basic intuition is that studies with large samples will be close to the true/mean effect, while small studies can easily deviate substantially from it. Furthermore, we expect the studies’ results to be symmetrically distributed around the mean effect. To the right, we show the funnel plot from Mertens et al. (2022) covering 440 effects. The white triangle shows the area we expect to be covered in black dots if there is no publication bias. The black dots do not follow the expected pattern but instead show an asymmetric pattern, with many studies finding strong positive effects but no studies finding strong negative effects. This asymmetry reveals publication bias.
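To make the funnel intuition concrete, here is a minimal simulation sketch in Python. Everything in it is an assumption made for illustration: the true effect is set to zero, standard errors stand in for sample size (a small standard error means a large study), and non-significant results get published only 5% of the time. Asymmetry is then measured with Egger's regression test, a common check that regresses each study's z-score on its precision; an intercept far from zero signals a lopsided funnel. It is one of several possible checks and is not taken from the analyses discussed above.

```python
import random


def simulate_studies(n_studies, true_effect, rng):
    """Draw (effect, standard_error) pairs for studies of varying size."""
    studies = []
    for _ in range(n_studies):
        se = rng.uniform(0.05, 0.40)         # small se = large study
        effect = rng.gauss(true_effect, se)  # observed effect scatters around the truth
        studies.append((effect, se))
    return studies


def publish(studies, rng, p_publish_nonsignificant=0.05):
    """Keep significant positive results; keep the rest only occasionally."""
    kept = []
    for effect, se in studies:
        significant = effect / se > 1.96
        if significant or rng.random() < p_publish_nonsignificant:
            kept.append((effect, se))
    return kept


def egger_intercept(studies):
    """OLS intercept from regressing z = effect / se on precision = 1 / se."""
    xs = [1 / se for _, se in studies]
    ys = [effect / se for effect, se in studies]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return y_mean - (sxy / sxx) * x_mean


rng = random.Random(2022)  # fixed seed so the run is repeatable
all_studies = simulate_studies(5000, true_effect=0.0, rng=rng)
published = publish(all_studies, rng)

unbiased_intercept = egger_intercept(all_studies)
biased_intercept = egger_intercept(published)
naive_mean = sum(effect for effect, _ in published) / len(published)

print(f"studies run: {len(all_studies)}, published: {len(published)}")
print(f"Egger intercept, all studies: {unbiased_intercept:.2f}")
print(f"Egger intercept, published only: {biased_intercept:.2f}")
print(f"mean published effect (true effect is 0): {naive_mean:.2f}")
```

With all studies included, the intercept should sit near zero. In the published subset it should be clearly positive, and the average published effect lands above zero even though the true effect is exactly zero.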

After having diagnosed the problem, we can compute a new mean effect that aims to adjust for publication bias. Keep in mind that the method isn't perfect, but it is very useful. The free, open-source statistical software JASP (akin to SPSS but better) can do publication bias adjustment from a list of effects and standard errors. Alternatively, the analyses can be computed using programming languages such as R or Python. One caveat is that there exist numerous methods for adjusting, and it’s unclear which one is best. We therefore recommend using the RoBMA (Robust Bayesian Meta-Analysis) package available in JASP or R. It fits a wide range of models and returns the average publication bias-adjusted estimate across all the models.
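For a feel of what an adjustment does with those two columns, here is a small Python sketch of PET and PEESE, two regression-based adjustments of the kind RoBMA includes in its model average. This is not RoBMA itself: there is no Bayesian model averaging and there are no selection models here. The five studies are hypothetical numbers invented for illustration, and `weighted_line_fit` is a helper written for this example. The idea is to regress effects on standard errors (PET) or squared standard errors (PEESE), weight by precision, and read off the intercept, which is the predicted effect of an ideal study with zero standard error.

```python
def weighted_line_fit(xs, ys, weights):
    """Weighted least-squares fit of y = intercept + slope * x."""
    total = sum(weights)
    x_mean = sum(w * x for w, x in zip(weights, xs)) / total
    y_mean = sum(w * y for w, y in zip(weights, ys)) / total
    sxy = sum(w * (x - x_mean) * (y - y_mean) for w, x, y in zip(weights, xs, ys))
    sxx = sum(w * (x - x_mean) ** 2 for w, x in zip(weights, xs))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope


# Hypothetical meta-analysis input: one entry per study.
effects = [0.11, 0.19, 0.32, 0.38, 0.62]
std_errors = [0.05, 0.10, 0.15, 0.20, 0.30]

# Inverse-variance weights: precise studies count for more.
weights = [1 / se**2 for se in std_errors]

# Unadjusted pooled estimate (fixed-effect weighted mean).
naive_estimate = sum(w * y for w, y in zip(weights, effects)) / sum(weights)

# PET: effect regressed on the standard error.
pet_estimate, _ = weighted_line_fit(std_errors, effects, weights)

# PEESE: effect regressed on the squared standard error.
peese_estimate, _ = weighted_line_fit([se**2 for se in std_errors], effects, weights)

print(f"unadjusted: {naive_estimate:.3f}")
print(f"PET-adjusted: {pet_estimate:.3f}")
print(f"PEESE-adjusted: {peese_estimate:.3f}")
```

In this toy data the smaller studies report larger effects, so both adjusted estimates fall below the unadjusted mean, and the PET estimate ends up close to zero. The two adjustments disagree with each other, which is exactly why averaging across models, as RoBMA does, is attractive.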

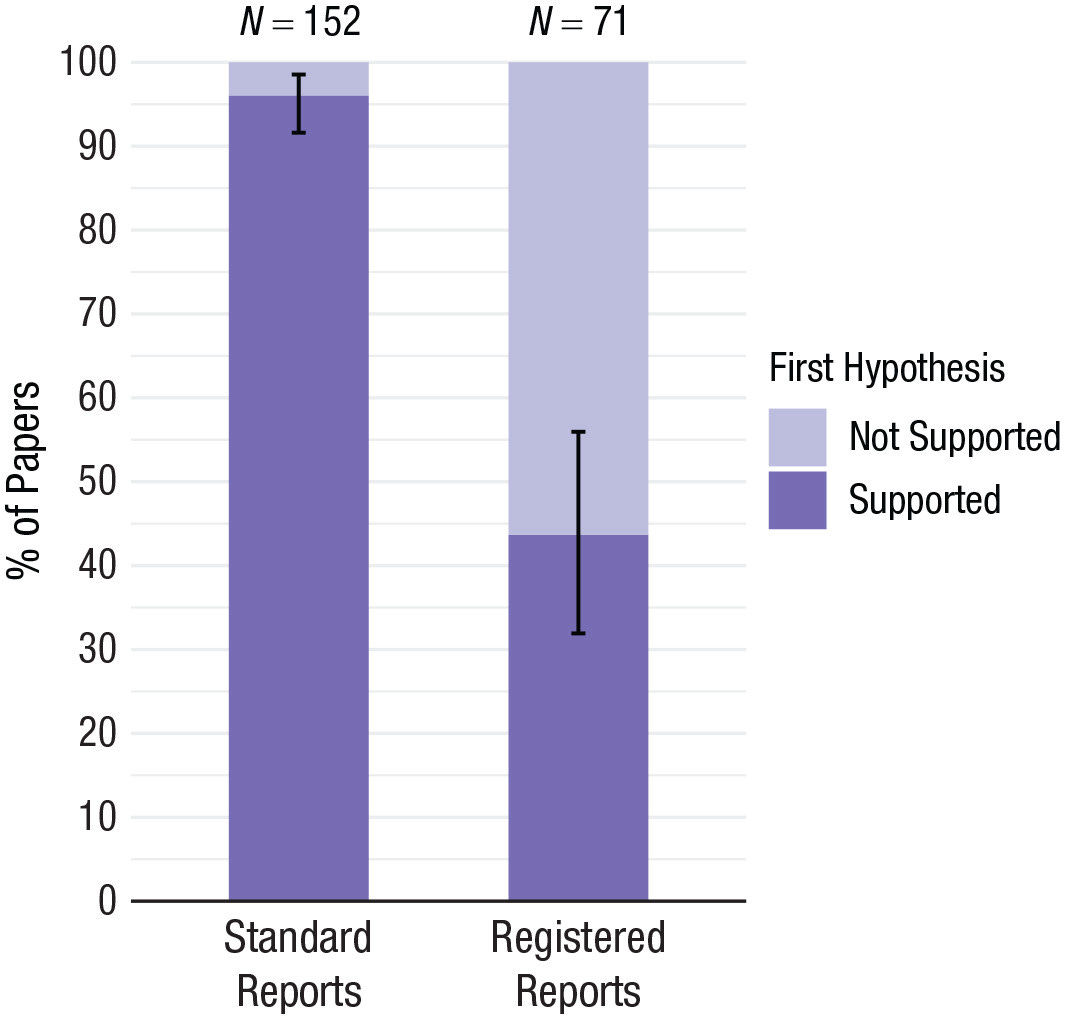

Publication bias adjustment methods can save some of the existing literature, but it’s better yet to ensure that future research won’t need saving. We can combat publication bias by realizing that the problem lies in how publication decisions depend on the outcome of the study. By making the two independent, most publication bias will vanish. This is what registered reports do. Here, the submission of an academic article involves two steps. Before data collection or analysis, researchers submit a study protocol that outlines the research project, including research questions, experiments, and proposed analyses. Decisions about publication are made based on this protocol, so that if it is accepted, the study gets published no matter the results, and publication bias is largely avoided. Registered reports are already implemented and accepted by journals. Figure 4, from this study, shows that registered reports find substantially fewer effects. As expected, we see a drop from around 99% in “normal studies” to 40% in registered reports.

The examples given here across disciplines should make it clear that publication bias is a looming problem across science. Thus, I encourage researchers and anyone who relies on scientific evidence to take this epistemic threat to our collective knowledge seriously. There are ways of fixing the problem.

Let’s use them.

Adam Finnemann is a PhD candidate at the University of Amsterdam where he is affiliated with the Psychological Methods Group and the Centre for Urban Mental Health. His main interest is applying ideas from complexity science to the field of psychology, particularly to study how cities shape our psychological lives.

If you liked this article, you might also like Adam’s previous post on the problems with peer review.